It is believed that the term “dark matter” (“matière obscure”) first appeared in 1906 in the works of the French mathematician Henri Poincaré, who described the strange features of the distribution of the rotation speeds of stars around the center of our Galaxy. Now, 117 years later, scientists still cannot say what this mysterious matter is or where it came from. We only know that its total mass in our universe is at least five times greater than that of “ordinary” matter and that it plays a huge role in the evolution of the universe. However, this only strengthens researchers’ resolve to unravel the mystery.

Astronomy is the science of observing the movement of celestial bodies, and humanity has been interested in it since the earliest times. During the Renaissance, when the reason for the movement of the planets was still unknown, there were disputes about the center around which they revolve: the Earth or the Sun. Galileo Galilei’s telescopic observations in 1610 provided more arguments in favor of a heliocentric world system. Tycho Brahe’s large array of planetary observations allowed his assistant Johannes Kepler to deduce the three laws of planetary motion between 1609 and 1619. At the end of the 17th century, building on this work, Isaac Newton formulated the universal law of gravitation, which explained why the planets move this way.

Almost 100 years after that, in 1781, William Herschel discovered a new planet: Uranus. Over years of observation, it became evident that its movement differed from the calculated one: an unknown mass sometimes accelerated it and sometimes slowed it down. Nature had thrown down a new challenge to scientists. The anomaly was explained by the influence of another, as yet unknown planet, which led to the discovery of Neptune in 1846, essentially by calculation: when astronomers pointed a telescope at the calculated coordinates, a new planet was indeed found nearby.

The situation with Mercury was similar: its movement also differed from the calculated one. By analogy, astronomers hoped to find a planet between the Sun and Mercury that would cause disturbances in the movement of the latter. They even came up with a name for it, “Vulcan”, but they searched in vain. The problem was solved in 1915, when Einstein’s General Theory of Relativity “upgraded” Newton’s theory of gravitation. Einstein then applied the new theory to the universe as a whole, and solving its equations produced an ever-changing universe. Like most scientists of the time, Einstein believed that our world is generally unchanged in time, so he added a coefficient to the equations of the General Theory of Relativity that was supposed to ensure the static nature of the universe. However, Alexander Friedmann showed that even with this coefficient, the universe can expand or contract.

Discovery of the “dark component”

In the 1930s, the Swiss astronomer Fritz Zwicky, working in the United States, observed clusters of galaxies and found that the total mass of the visible objects in such clusters is much smaller than the mass calculated from the speed of their movement. The difference was up to a hundredfold, which led him to believe that there must be a lot of invisible mass. Later, over several decades, Vera Rubin studied the rotation of individual galaxies. Most of their stars are in the region near the core, so almost all of the galactic mass should be concentrated there. According to Newtonian mechanics, the orbital speed of objects should then decrease with distance from the nucleus. But the observations provided a completely different picture: the linear velocity of the stars from the middle to the visible edges of the galactic disks is practically the same, exceeding the calculated value. In this study, the mass of the visible objects and the mass calculated from the rotation differed by about an order of magnitude.
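The Newtonian expectation described above can be sketched numerically. The short Python example below assumes, purely for illustration, that all of a galaxy's mass (a hypothetical 10¹¹ solar masses) sits at its center, and then asks how much mass a flat rotation curve would require instead. The function names, the central mass, and the flat speed of 220 km/s are assumptions made for this sketch, not values taken from the studies mentioned.

```python
import math

G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30   # solar mass, kg
KPC = 3.086e19     # one kiloparsec, m


def keplerian_speed(central_mass_kg: float, radius_m: float) -> float:
    """Circular orbital speed around a central point mass: v = sqrt(G*M/r)."""
    return math.sqrt(G * central_mass_kg / radius_m)


def enclosed_mass(speed_m_s: float, radius_m: float) -> float:
    """Mass that must lie inside radius r to sustain circular speed v: M = v^2*r/G."""
    return speed_m_s ** 2 * radius_m / G


# Illustrative assumptions (not measured values).
central_mass = 1e11 * M_SUN   # all mass placed at the galactic center
flat_speed = 2.2e5            # a flat rotation curve at 220 km/s, in m/s

for r_kpc in (5, 10, 20, 40):
    r = r_kpc * KPC
    v_expected = keplerian_speed(central_mass, r) / 1e3      # km/s
    m_required = enclosed_mass(flat_speed, r) / M_SUN        # solar masses
    print(f"r = {r_kpc:2d} kpc: expected v = {v_expected:6.1f} km/s, "
          f"mass needed for a flat curve = {m_required:.2e} M_sun")
```

In this sketch the expected speed halves every time the distance quadruples, whereas a flat curve implies that the enclosed mass keeps growing in proportion to the radius, far beyond the region where most of the stars are.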

Astronomers again faced a familiar problem: the speed of the observed bodies differed from the theoretically predicted one. The proposed solution was also familiar: either something had gone unnoticed or the whole theory was wrong. They started with the search for the invisible mass, which was called dark matter. Initially, it was supposed to consist of MACHOs (Massive Astrophysical Compact Halo Objects), such as black holes of about a solar mass, neutron stars, brown dwarfs, and lonely planets wandering through galaxies independently of any star; RAMBOs (Robust Associations of Massive Baryonic Objects); dust and gas; and, finally, neutrinos. MACHOs and RAMBOs are massive enough bodies, but they are too compact and emit too little light (or other radiation) to be observed directly. Since such objects manifest themselves through gravitational interaction, affecting the motion of visible bodies, an effective way to detect them is gravitational lensing, which is still used today. But it quickly became evident that the mass of these objects is not enough to solve the problem.

New tools

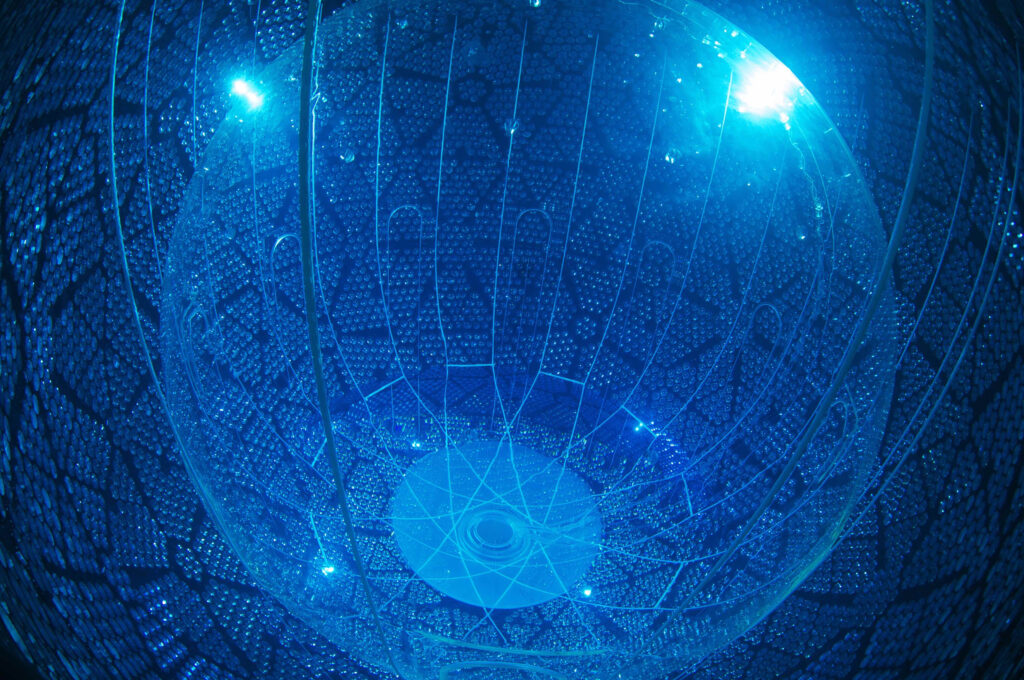

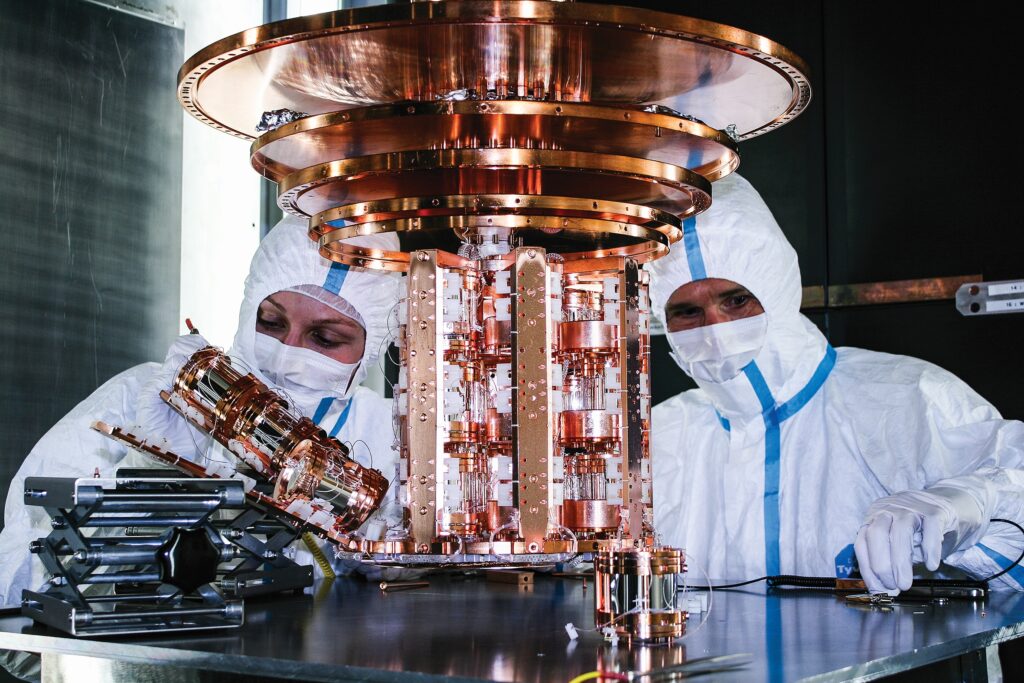

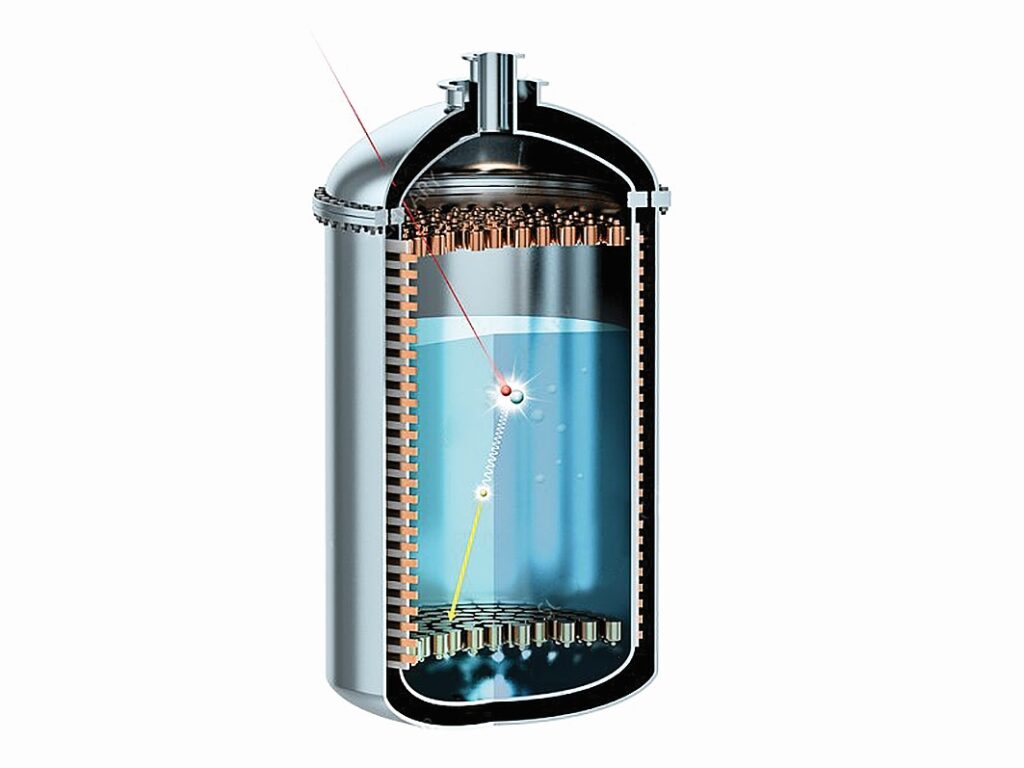

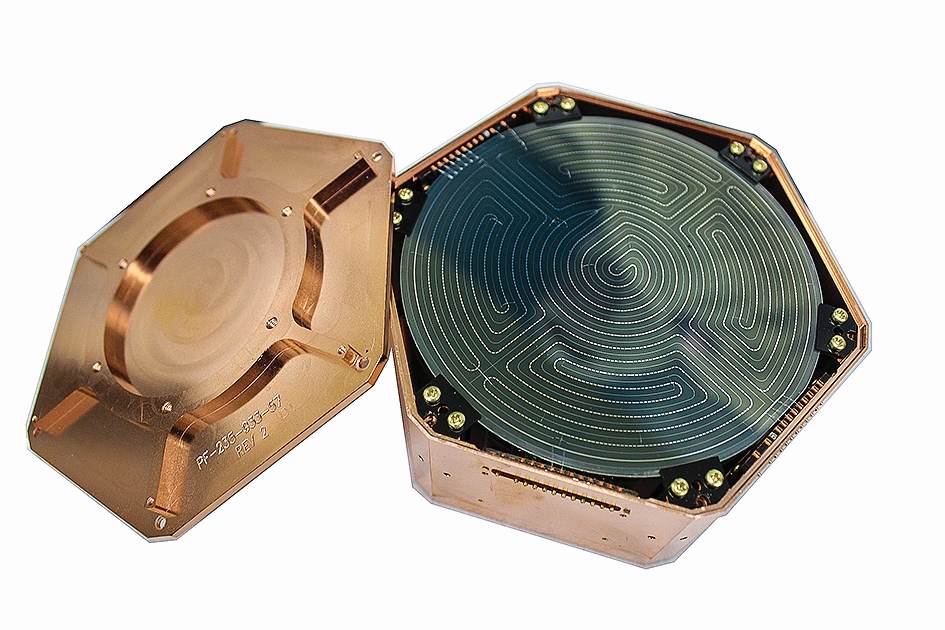

After that, the focus shifted toward elementary particles, so much so that a separate class of hypothetical particles, WIMPs (Weakly Interacting Massive Particles), was singled out. Of the four fundamental interactions (weak nuclear, strong nuclear, electromagnetic and gravitational), they participate only in the first and the last. Of the known particles with such qualities, only neutrinos were familiar to science at that time, but the question of their mass remained open: do they have a rest mass, or are they massless like photons? To answer it, neutrino observatories were built deep underground to directly detect these elusive particles. In 1998, scientists discovered that neutrinos can transform from one type into another, which means that they do have mass. Although neutrinos are staggeringly numerous (tens of billions of neutrinos formed in the interior of the Sun fly through your fingernail every second), their mass is too small to account for dark matter. This disappointed scientists but did not stop the search in this direction.

Since the beginning of the 21st century, additional underground observatories have been built in different parts of the planet for the direct detection of WIMPs. The Gran Sasso laboratory in Italy alone hosts several experiments on the direct detection of “dark matter” particles (DAMA/NaI, DAMA/LIBRA, CRESST, XENON and others). The equipment is placed deep underground to shield the extremely sensitive devices, which are designed to detect the interaction of the mysterious particles with matter, from cosmic rays (primarily protons and other atomic nuclei). A heavy inert gas, xenon, is liquefied, and several tons of it are poured into a container surrounded by detectors. When a WIMP interacts with a xenon nucleus, energy is released in the form of photons, which are registered by the detectors. In some experiments (CRESST, CDMS, DAMA), crystals are used instead of liquefied inert gas, in much smaller quantities; in some of them the crystals are cooled to extremely low temperatures to ensure high sensitivity.

The main goal of these experiments, the direct detection of WIMPs, has not yet been achieved, but they cannot be called failures either, because they have produced many accompanying results: for example, the observation of double electron capture, limits on neutrinoless double β-decay, and the observation of the α-decay of tungsten-180, whose half-life is estimated at 1.8×10¹⁸ years (for comparison, the age of the universe does not exceed 1.4×10¹⁰ years). There were also engineering records, such as cooling down to millikelvin temperatures, achieving superconductivity, and fantastic sensitivity down to a single photon. These results made it possible to set new limits on the masses of WIMPs.
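To get a feel for the comparison between the half-life of tungsten-180 and the age of the universe, the following minimal Python sketch applies the standard decay law, fraction decayed = 1 − 2^(−t/T), to the two figures quoted above. The function and constant names are illustrative choices for this example.

```python
import math

HALF_LIFE_W180_YEARS = 1.8e18    # half-life of tungsten-180 quoted in the text
AGE_OF_UNIVERSE_YEARS = 1.4e10   # upper bound on the age of the universe quoted in the text


def decayed_fraction(elapsed_years: float, half_life_years: float) -> float:
    """Fraction of nuclei decayed after the elapsed time: 1 - 2^(-t/T)."""
    # expm1 keeps numerical precision when the decayed fraction is tiny.
    return -math.expm1(-math.log(2) * elapsed_years / half_life_years)


ratio = HALF_LIFE_W180_YEARS / AGE_OF_UNIVERSE_YEARS
fraction = decayed_fraction(AGE_OF_UNIVERSE_YEARS, HALF_LIFE_W180_YEARS)

print(f"half-life / age of the universe: {ratio:.2e}")
print(f"fraction of nuclei decayed over the age of the universe: {fraction:.2e}")
```

The half-life turns out to be roughly a hundred million times longer than the age of the universe, so only a few nuclei per billion would have decayed in all that time, which is why registering such a decay counts as a feat of sensitivity.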

Along with experiments on direct detection, attempts have been made to detect dark matter particles indirectly, based on the idea that these particles can annihilate each other or simply decay, producing high-energy radiation (X-rays and γ-rays). The Fermi, XMM-Newton, and Chandra space telescopes are engaged in this task. Their goal is to detect excess radiation from those regions of the universe where a lot of mass is concentrated (galactic nuclei and galaxy clusters). In addition, the Large Hadron Collider is involved in the search, reproducing the conditions in which dark matter particles might be born.

At the end of the 20th century, two groups of scientists observed supernovae in distant galaxies. According to Hubble’s law, the farther away the observed objects are, the faster they should be moving away from us due to the expansion of the universe. In 1998 and 1999, studies were published showing that the actual movement of distant galaxies deviates from this law. The expectation had been that, since the universe contains a lot of mass and even more invisible mass, its expansion should be slowed down by gravitational forces. But the observations indicated the opposite: the universe is expanding with acceleration.
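Hubble’s law itself is a simple proportionality, v = H₀·d, which the Python sketch below illustrates. The value of the Hubble constant used here, 70 km/s per megaparsec, is a round number assumed for the example (published measurements lie roughly between 67 and 74), and the function name is hypothetical. The linear form holds for relatively nearby galaxies; the studies mentioned above looked for departures from it at very large distances.

```python
# Assumed round value of the Hubble constant, in km/s per megaparsec.
HUBBLE_CONSTANT_KM_S_PER_MPC = 70.0


def recession_velocity_km_s(distance_mpc: float,
                            h0: float = HUBBLE_CONSTANT_KM_S_PER_MPC) -> float:
    """Recession velocity from the linear Hubble law: v = H0 * d."""
    return h0 * distance_mpc


for distance in (10, 100, 500):
    velocity = recession_velocity_km_s(distance)
    print(f"d = {distance:3d} Mpc -> v = {velocity:8.1f} km/s")
```

Doubling the distance doubles the recession velocity. A decelerating or accelerating expansion shows up as a systematic deviation from this straight line for the most distant objects.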

The leaders of the scientific groups involved in the discovery, Saul Perlmutter and Brian Schmidt with Adam Riess, received the Nobel Prize for it in 2011. The cause of the accelerated expansion was called dark energy, but what exactly produces this behavior of the universe is still unknown. Once again, the proposed explanations are either to introduce a new field or to modify the theory of gravity. The universe is described by the Einstein-Hilbert equations of the General Theory of Relativity, which predict the results of observations as well as Newtonian gravity does within the Solar System (except for Mercury), so some scientists are trying to modify them. But hypotheses about the existence of an as yet undiscovered physical field are more popular.

Theoretical explanations

Here it is appropriate to recall Einstein’s unsuccessful attempt to “stabilize” the universe using an additional coefficient in his equations. This idea was unexpectedly revived to describe the accelerated expansion of the universe. Now it is called the Λ-term, or the cosmological constant, and is interpreted as vacuum energy, which is present everywhere and “expands” space. More space in the universe means more vacuum energy, which in turn means ever more space.

The second most popular hypothesis introduces a physical field that fills all of space and is described as an ideal fluid. The pressure of this fluid (which causes the expansion) is related to its density through a single parameter that enters the equations of the General Theory of Relativity, the so-called equation-of-state parameter ω, which can in principle take any real value. But physics imposes certain restrictions on it: since the expansion occurs with acceleration, this value must be less than –⅓, and at ω = –1 this field becomes identical to the cosmological constant. In the range –1 < ω < –⅓, such a field is called “quintessence”, while a field with ω < –1 is called “phantom”. The difference between these entities is as big as the future of the universe. On the quintessence side, in some scenarios the expansion will someday be replaced by compression (that is, the universe will collapse and “pulsate”). With “phantom” dark energy, the expansion will keep accelerating and eventually lead to the Big Rip: it will affect ever smaller scales, eventually tearing apart even stable nuclei and subnuclear particles. According to the latest observations, the value of this parameter is close to –1 (and therefore the expansion of the universe will continue forever), so the cosmological constant is the most promising candidate for the role of dark energy. In addition to describing the future, the notion of dark energy may help elucidate the universe’s early past: a similar mechanism can explain cosmological inflation, the near-instantaneous and enormous expansion of space almost immediately after the Big Bang.
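The ranges of ω listed above can be summarized in a few lines of Python. The sketch relies on a standard textbook relation that the article does not spell out: for a fluid with pressure p = ω·ρ·c², the acceleration of the expansion is proportional to −(1 + 3ω), so the expansion accelerates only when ω < –⅓. The function names and the tolerance used to recognize ω = –1 are assumptions made for this example.

```python
def acceleration_factor(w: float) -> float:
    """Sign-carrying factor -(1 + 3w): positive means accelerated expansion."""
    return -(1.0 + 3.0 * w)


def classify_dark_energy(w: float, tol: float = 1e-9) -> str:
    """Name the dark energy candidate that corresponds to the parameter w."""
    if w >= -1.0 / 3.0:
        return "no acceleration"        # cannot drive accelerated expansion
    if abs(w + 1.0) <= tol:
        return "cosmological constant"  # w = -1, vacuum energy
    if w < -1.0:
        return "phantom"                # leads toward the Big Rip
    return "quintessence"               # -1 < w < -1/3


for w in (0.0, -0.5, -1.0, -1.2):
    print(f"w = {w:5.2f}: factor = {acceleration_factor(w):5.2f}, "
          f"{classify_dark_energy(w)}")
```

A measured value close to –1 falls right on the boundary between the two exotic cases, which is why ever more precise observations are needed to tell them apart from the cosmological constant.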

Inflation, in turn, explains the isotropy of the relic radiation (the cosmic microwave background) studied by the COBE, WMAP, and Planck missions. There is also a hypothesis, based on quantum mechanics, that dark energy arises from the spontaneous formation and annihilation of pairs of virtual particles and antiparticles. But the value of the cosmological constant that quantum mechanical calculations give for this process exceeds the observed one by more than a googol times (by a factor of approximately 10¹²⁰).

Although dark matter and dark energy act in opposite ways, there have been repeated attempts to describe both phenomena within a unified approach. A fairly well-known attempt, from 2018, is a paper by the British scientist Jamie Farnes, who uses the concept of a fluid with negative mass to explain both dark entities. In addition, he suggested that negative mass is continuously being created in the universe. Each of these ideas (negative mass and the continuous creation of mass) had already appeared separately in cosmology but did not stand the test of observation. Farnes combined the two concepts.

Farnes’s work received a mixed reaction from the scientific community. The criticism concerned the large number of assumptions it makes, for example the strange interaction of negative masses. If positive masses attract each other, then, by analogy with electric charges, negative masses should also attract each other, while positive and negative masses should repel. In the article it was the other way around: “+” and “-” attract, and “-” and “-” repel.

Prospects of research

It is worth understanding that dark matter and dark energy are like the legendary “42” of Douglas Adams: these concepts are used to describe specific observed phenomena (the rapid rotation of stars in galaxies, the rapid motion of galaxies in clusters, and the accelerated expansion of the universe), the true cause of which still remains unknown. Nevertheless, we can make assumptions and predictions about these phenomena and check them against other observations. Today, the universe is best described by the cosmological constant (Λ) in the role of dark energy and “cold” dark matter (CDM), whose particles move at speeds that are low relative to the speed of light. Together, they make up the ΛCDM model.

As for dark matter, it is worth noting that the term now means hypothetical particles and by no means bodies consisting of “normal” baryonic matter (which includes protons and neutrons), and even less antimatter. Dark matter particles may have their own dark antiparticles, and when they meet they will also annihilate, thus revealing themselves to the observers who are keenly looking for them. According to the available observational data, the ΛCDM model of the universe assumes about 68% dark energy and 27% dark matter; the remaining 5% is the baryonic matter that makes up us, this journal and everything that our eyes can see. A better understanding of the invisible 95% of the universe requires more and better-quality observations. In the near future, we will be helped in this by the James Webb Space Telescope (JWST), which can peer much deeper into the past of the universe than its predecessor Hubble, and by the ground-based giant Vera C. Rubin Observatory (formerly the Large Synoptic Survey Telescope, LSST) with an 8.4-meter mirror.
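The percentages quoted for the ΛCDM model can be cross-checked with simple arithmetic, as in the small Python sketch below. The dictionary and variable names are illustrative; the numbers are the rounded shares given in the text.

```python
# Rounded shares of the universe's energy budget quoted in the text, in percent.
budget_percent = {
    "dark energy": 68.0,
    "dark matter": 27.0,
    "baryonic matter": 5.0,
}

total = sum(budget_percent.values())
invisible = budget_percent["dark energy"] + budget_percent["dark matter"]
dark_to_baryonic = budget_percent["dark matter"] / budget_percent["baryonic matter"]

print(f"total: {total:.0f}%")
print(f"invisible share (dark energy + dark matter): {invisible:.0f}%")
print(f"dark matter / baryonic matter: {dark_to_baryonic:.1f}")
```

With these rounded figures, dark matter outweighs baryonic matter by a factor of a little over five, which matches the statement at the start of the article that its total mass is at least five times greater than that of “ordinary” matter.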